As Artificial Intelligence (AI) continues to reshape industries by driving innovations in business intelligence, automation, and personalized experiences, it also brings new security challenges. AI systems process enormous volumes of sensitive data, making them prime targets for cyberattacks, data breaches, and unauthorized access.

With cyber threats growing increasingly sophisticated, securing AI-driven systems is paramount. Two of the most essential measures are encryption and data masking, which protect sensitive information in different ways: encryption makes data unreadable without a key, while masking substitutes realistic stand-in values for the real ones.

This article delves into the role of encryption and data masking in AI-powered security, highlighting their differences and how organizations can implement them to strengthen their cybersecurity framework.

What is AI-Powered Security?

AI-powered security leverages AI and machine learning (ML) to enhance cybersecurity by detecting, analyzing, and responding to threats in real time. Unlike traditional security solutions, which rely on predefined rules, AI-driven systems learn patterns from data, which lets them flag previously unseen threats and, in some cases, stop attacks before they cause damage. Here’s a quick overview of AI-powered security:

Core Concept: AI-powered security uses AI to automate and optimize cybersecurity processes, including:

- Analyzing vast datasets to identify patterns and anomalies.

- Predicting potential threats before they materialize.

- Automating responses to known threats.

Key Aspects:

- Enhanced Threat Detection: AI algorithms can detect subtle patterns in real time that may indicate malicious activity.

- Proactive Security: Predictive analytics allow organizations to take preventative action, strengthening defenses before attacks occur.

- Automation: Routine security tasks, such as malware scanning and vulnerability assessments, are automated to free up resources for more complex issues.

- Adaptive Learning: AI continuously learns from new threats, adapting its defenses as cyberattacks evolve.

- Behavioral Analysis: AI learns a baseline of normal system behavior and flags deviations from it, which makes it an effective way to detect insider threats.

In essence, AI-powered security aims to create an intelligent, responsive cybersecurity framework capable of keeping pace with increasingly complex cyber threats.
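To make the behavioral analysis idea concrete, here is a minimal sketch of baseline-and-deviation detection. It uses a plain z-score rather than a trained ML model, and the login counts, the function name `flag_anomalies`, and the threshold of 3 standard deviations are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, observations, threshold=3.0):
    """Return observations that deviate strongly from the baseline.

    An observation is flagged when it lies more than `threshold`
    standard deviations away from the baseline mean.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: anything different is a deviation.
        return [x for x in observations if x != mu]
    return [x for x in observations if abs(x - mu) / sigma > threshold]


# Hypothetical logins-per-hour counts for one account during normal use.
baseline_logins = [4, 5, 6, 5, 4, 6, 5, 5, 4, 6]

# New activity to check against that baseline.
new_counts = [5, 6, 48, 4]

suspicious = flag_anomalies(baseline_logins, new_counts)
print(suspicious)
```

A production system would typically replace the z-score with a learned model and retrain the baseline as behavior changes, but the shape of the logic stays the same: learn what normal looks like, then flag what falls far outside it.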

Why AI Security is Critical in Cybersecurity

With cyberattacks becoming more sophisticated and frequent, AI security is more important than ever. Here’s why:

- Evolving Threat Landscape: Cybercriminals are now using AI to develop advanced malware, making it harder for traditional security systems to keep up.

- Increased Data Volume: AI can process and analyze massive amounts of data, identifying anomalies and patterns that may go unnoticed by human analysts.

- Automation and Faster Response Times: AI automates tasks like threat detection, allowing for quicker responses to attacks and reducing the time it takes to mitigate potential damage.

- Enhanced Threat Detection and Prediction: AI’s ability to analyze historical attack data and predict future threats helps organizations stay one step ahead of cybercriminals.

- Improved Vulnerability Management: AI can prioritize vulnerabilities based on their severity, helping organizations focus on the most critical security weaknesses.

Incorporating AI into cybersecurity strategies not only enhances defenses but also allows for more efficient threat detection, faster responses, and proactive measures to prevent breaches.

AI Security Vulnerabilities: Risks You Need to Know

While AI systems provide robust security features, they also introduce unique vulnerabilities. Understanding these risks is crucial for effective AI security:

- AI Model Poisoning: Attackers manipulate the training data of AI models to induce flawed or biased predictions, potentially leading to malicious behavior.

- Adversarial Attacks: Attackers craft inputs designed to fool AI models, causing incorrect decisions or misclassifications.

- Data Privacy Concerns: AI systems process sensitive data, and mishandling this data can lead to breaches and violations of regulations like GDPR or HIPAA.

- Model Extraction: Attackers may reverse-engineer or steal AI models to gain a competitive advantage or bypass security features.

- Model Inversion: Attackers attempt to reconstruct sensitive data from the AI model’s output, compromising privacy.

- Lack of Explainability: Some AI models lack transparency, making it difficult to understand how decisions are made, which can hide vulnerabilities.

- Supply Chain Vulnerabilities: AI models rely on third-party libraries and datasets, creating potential security risks from compromised dependencies.

- Security of AI Infrastructure: If the hardware or software running AI models is compromised, the entire AI system is at risk.

Addressing these vulnerabilities is critical to maintaining secure and trustworthy AI systems.
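As an illustration of the adversarial attack risk listed above, the sketch below evades a toy linear detector by nudging each input feature slightly against the model’s weights, in the style of the fast gradient sign method. The weights, bias, input values, and `epsilon` are hypothetical; real attacks target far larger models, but the principle is the same.

```python
def score(weights, bias, x):
    """Linear model score: positive means the input is flagged."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def is_flagged(weights, bias, x):
    return score(weights, bias, x) > 0


def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)


def evade(weights, x, epsilon):
    """Shift every feature by at most epsilon to lower the score.

    For a linear model, the gradient of the score with respect to the
    input is the weight vector, so stepping against sign(w) reduces the
    score as much as possible for a given per-feature budget.
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical detector parameters and a sample it correctly flags.
weights = [2.0, -1.0, 0.5]
bias = -0.5
original = [1.0, 1.0, 1.0]

adversarial = evade(weights, original, epsilon=0.4)

print(is_flagged(weights, bias, original))     # flagged by the detector
print(is_flagged(weights, bias, adversarial))  # slips past after a small change
```

Running this kind of perturbation against your own models is one simple form of the adversarial testing that helps reveal how fragile a decision boundary is.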

AI Security Best Practices

To mitigate AI security risks, organizations can implement the following best practices:

- Secure AI Model Development: Ensure data integrity and model validation, conduct adversarial testing, and implement secure coding practices.

- Data Privacy and Governance: Use data minimization, anonymization, and masking techniques, and ensure compliance with data privacy regulations like GDPR.

- Robust Access Controls and Authentication: Implement role-based access control, multi-factor authentication, and conduct regular security audits.

- Continuous Monitoring and Threat Detection: Use AI-powered anomaly detection and threat intelligence integration to stay ahead of emerging threats.

- Secure AI Infrastructure: Ensure AI systems are built on secure hardware and software platforms, and deploy cloud-specific security best practices.

- Explainability and Transparency: Use explainable AI techniques to make AI decisions more transparent, and maintain detailed documentation of model development processes.

These practices help organizations build resilient AI systems that are secure, transparent, and able to adapt to new threats.
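The role-based access control practice above can be sketched in a few lines. The role names, action names, and the `is_allowed` helper are hypothetical; the point is the deny-by-default rule, where an unknown role or an unlisted action is refused.

```python
# Hypothetical mapping of roles to the actions they may perform
# on an AI system and its data.
ROLE_PERMISSIONS = {
    "ml_engineer": {"read_features", "train_model"},
    "auditor": {"read_audit_log"},
    "admin": {"read_features", "train_model", "read_audit_log", "export_model"},
}


def is_allowed(role, action):
    """Deny by default: only explicitly granted actions are permitted."""
    return action in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("ml_engineer", "train_model"))   # granted explicitly
print(is_allowed("ml_engineer", "export_model"))  # not granted to this role
print(is_allowed("intern", "read_features"))      # unknown role
```

In practice a check like this sits behind multi-factor authentication, and each decision is logged so that security audits can review who accessed what.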

What is Encryption and How Does it Work?

Encryption is the process of converting readable data (plaintext) into an unreadable format (ciphertext) to protect it from unauthorized access. Only those with the correct decryption key can reverse the encryption and access the original data.

- Encryption Algorithm: A mathematical function used to transform plaintext into ciphertext. Common algorithms include AES and RSA, along with the older Triple DES, which NIST has deprecated in favor of AES.

- Symmetric Encryption: The same key is used for both encryption and decryption (e.g., AES).

- Asymmetric Encryption: A pair of keys is used, a public key for encryption and a private key for decryption (e.g., RSA).

Encryption is crucial for securing data during transmission or storage, ensuring that sensitive information remains private.
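To show how a public and private key pair relate, here is textbook RSA with deliberately tiny primes. This is a teaching sketch only and offers no real security: the primes, the exponent, the function names, and the integer message are all assumptions, and production code should rely on a vetted cryptography library with proper key sizes and padding.

```python
from math import gcd


def make_keypair(p, q, e):
    """Build a toy RSA key pair from two primes and a public exponent."""
    n = p * q
    phi = (p - 1) * (q - 1)
    if gcd(e, phi) != 1:
        raise ValueError("e must be coprime with (p - 1) * (q - 1)")
    d = pow(e, -1, phi)  # modular inverse of e (Python 3.8+)
    return (e, n), (d, n)


def encrypt(public_key, message):
    """Encrypt an integer message with the public key."""
    e, n = public_key
    if not 0 <= message < n:
        raise ValueError("message must be an integer in the range [0, n)")
    return pow(message, e, n)


def decrypt(private_key, ciphertext):
    """Recover the integer message with the private key."""
    d, n = private_key
    return pow(ciphertext, d, n)


# Tiny primes, for illustration only.
public_key, private_key = make_keypair(p=61, q=53, e=17)

message = 65
ciphertext = encrypt(public_key, message)
recovered = decrypt(private_key, ciphertext)

print(ciphertext != message)  # the ciphertext hides the original value
print(recovered == message)   # only the private key restores it
```

Anyone can encrypt with the public key, but only the holder of the private key can decrypt. That property is what makes asymmetric encryption useful for exchanging secrets over an untrusted network.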

What is Data Masking?

Data masking is the process of obscuring sensitive information by replacing it with fake but realistic-looking data. It’s typically used in non-production environments like development or testing to protect sensitive information while maintaining the data’s structure and usability.

- Static Data Masking: Permanently replaces the sensitive values in a copy of the data, so the masked copy cannot be reverted to its original state.

- Dynamic Data Masking: Masks data in real time as users query it, based on their permissions, while the stored data itself remains unchanged.

Data masking protects sensitive data from unauthorized access while still allowing for its use in testing or training environments.
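A minimal sketch of static masking follows, assuming a hypothetical customer record with a card number and an email address. The helper names `mask_digits` and `mask_email` are illustrative. Each helper keeps the format of the original value (length, separators, last four digits) so that test code still behaves as it would on real data.

```python
import secrets
import string


def mask_digits(value, keep_last=4):
    """Replace every digit except the last `keep_last` with a random digit.

    Separators such as dashes and spaces are preserved, so the masked
    value has the same shape as the original.
    """
    total_digits = sum(ch.isdigit() for ch in value)
    masked = []
    seen = 0
    for ch in value:
        if ch.isdigit():
            seen += 1
            if seen <= total_digits - keep_last:
                masked.append(secrets.choice(string.digits))
                continue
        masked.append(ch)
    return "".join(masked)


def mask_email(email):
    """Swap the local part for random letters and use a reserved domain."""
    local, _, _domain = email.partition("@")
    fake_local = "".join(secrets.choice(string.ascii_lowercase) for _ in local)
    return f"{fake_local}@example.com"


# Hypothetical production record.
record = {
    "name": "Jane Doe",
    "email": "jane.doe@corp.example",
    "card": "4111-1111-1111-1111",
}

# The masked copy is what would be loaded into a test database.
masked = {
    "name": "Test User",
    "email": mask_email(record["email"]),
    "card": mask_digits(record["card"]),
}

print(masked)
```

Because the replacements are random and the originals are not stored alongside them, there is no key that turns the masked copy back into the real record.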

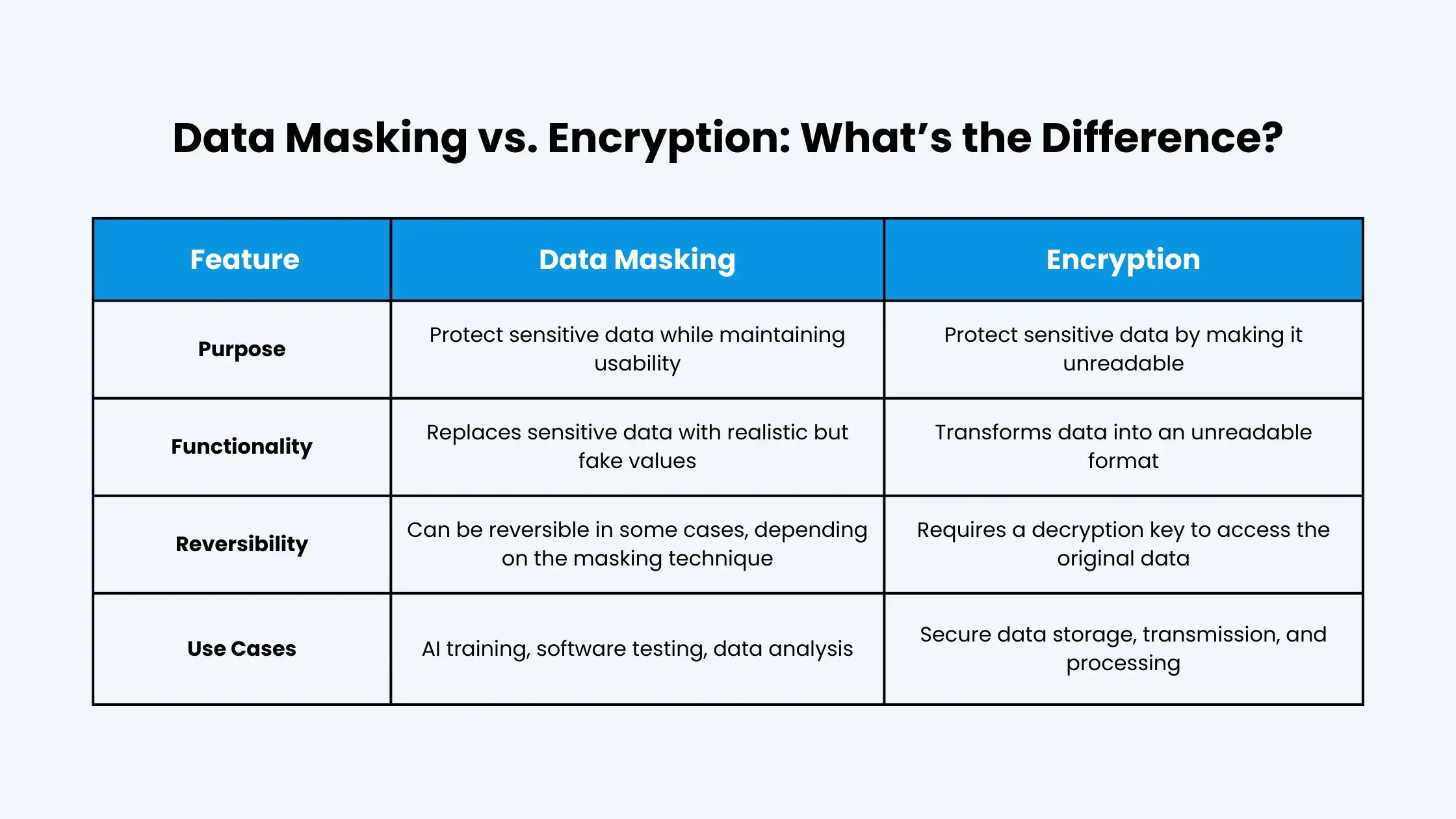

Data Masking vs. Encryption: What’s the Difference?

When discussing data security, it’s crucial to understand the distinct roles of data masking and encryption. While both serve to protect sensitive information, they do so in fundamentally different ways. Here’s a breakdown of their key differences:

Data Masking:

Purpose:

- Data masking involves replacing sensitive data with realistic, but fictional, substitutes.

- The goal is to create a functional version of the data that can be used for purposes like testing, development, and analytics, without exposing the actual sensitive information.

Functionality:

- It replaces real data with altered data, preserving the format and structure of the original.

- The altered data is designed to look and behave like real data, maintaining its usability for various applications.

Reversibility:

- Typically, data masking is not reversible.

- Once the data is masked, the original sensitive information is effectively replaced.

Use Cases:

- Commonly used in non-production environments, such as development, testing, and training, where real data is not required.

- Useful for compliance with data privacy regulations by allowing data to be used without exposing sensitive details.

Encryption:

Purpose:

- Encryption transforms data into an unreadable format (ciphertext) to protect its confidentiality.

- The goal is to prevent unauthorized access to sensitive data during storage and transmission.

Functionality:

- It uses cryptographic algorithms and keys to scramble data, rendering it unintelligible to anyone without the correct decryption key.

- The original data remains intact but is made inaccessible.

Reversibility:

- Encryption is reversible with the correct decryption key.

- Authorized users can decrypt the ciphertext to access the original data.

Use Cases:

- Essential for protecting data in transit (e.g., online transactions, email) and data at rest (e.g., stored in databases, hard drives).

- Used to secure sensitive communication and protect against data breaches.

Key Differences Summarized:

- Usability: Masked data remains usable for certain functions, while encrypted data is generally unusable until decrypted.

- Reversibility: Masking is typically irreversible, while encryption is reversible with the correct key.

- Purpose: Masking protects data in use, while encryption protects data in storage and transit.

In essence, data masking is about replacing sensitive data, while encryption is about scrambling it. Both are valuable security tools, but they serve different purposes and are used in different scenarios.

Protecting AI-Powered Applications with Data Masking and Dynamic Data Masking

- Everite Solutions specializes in implementing dynamic data masking to ensure that AI can learn from real data without compromising privacy.

- Our solutions provide granular control over data access, ensuring that only authorized users can view sensitive information.
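As a generic illustration of dynamic data masking (not a description of any particular product), the sketch below leaves the stored record untouched and decides what each caller sees at read time. The role names, the `CustomerRecord` fields, and the masking formats are assumptions.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class CustomerRecord:
    name: str
    email: str
    ssn: str


# Hypothetical roles that are allowed to see unmasked values.
UNMASKED_ROLES = {"compliance_officer"}


def mask_email(email):
    """Keep the first character and the domain, hide the rest."""
    local, _, domain = email.partition("@")
    return f"{local[:1]}***@{domain}"


def mask_ssn(ssn):
    """Show only the last four characters."""
    return "***-**-" + ssn[-4:]


def view_record(record, role):
    """Return the record as the given role is allowed to see it.

    The stored record is never modified; masking happens on the copy
    that is handed back to the caller.
    """
    data = asdict(record)
    if role in UNMASKED_ROLES:
        return data
    data["email"] = mask_email(data["email"])
    data["ssn"] = mask_ssn(data["ssn"])
    return data


stored = CustomerRecord("Jane Doe", "jane.doe@example.com", "123-45-6789")

analyst_view = view_record(stored, "data_scientist")
officer_view = view_record(stored, "compliance_officer")

print(analyst_view)
print(officer_view)
```

The same stored row yields two different views, which is the key contrast with static masking: nothing is overwritten, and access policy alone determines who sees the real values.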

Best Practices for AI Data Security

- Regular security audits, robust access controls, and continuous employee training are essential for maintaining AI security.

- We help organizations implement these best practices, minimizing the risk of security breaches and ensuring compliance with data privacy regulations.

Conclusion

The risks associated with AI security are significant and cannot be ignored. Organizations must prioritize data protection and implement robust security measures to safeguard their AI systems and data.

Encryption and data masking are essential tools for protecting AI systems and data, providing a strong foundation for secure AI innovation. Partnering with experts like Everite Solutions is crucial for implementing comprehensive AI security strategies and navigating the complexities of data privacy regulations.

Explore Everite Solutions’ AI security solutions to learn how to protect your AI applications and data. Contact us today for a consultation and discover how we can help you build a secure and compliant AI future.